Some iOS apps are now in their 10th year. In the EyeSpyFX app portfolio we haven’t got any that old, but we have some 7-, 8- and 9-year-olds. One of our apps, “My Webcam”, would have been over ten years old, but we retired it two years ago. In fact, that app would be in its 16th year had it survived. Before its iOS manifestation, “My Webcam” had a life on BlackBerry and, before that, as a Java app on Nokia and Sony Ericsson.

Apps get discontinued for lots of reasons, for example:

- Removed by the app store due to lack of updates

- Competition from other apps

- The app store closes

- The original reason for the app does not exist anymore

- No sustainable commercial rationale

- The app function is now an OS function included in standard software

- The app is so bloated that it is difficult and expensive to update

- The app was built using a previous development environment and refactoring it is not worth the cost

- The app gets subdivided into smaller apps

The memory of “My Webcam” prompts me to reflect on the life cycle of apps in a general sense. I wonder, if you could go to the app store and filter a search by date of publication, what the average app life would turn out to be. In ten years’ time, will there be apps that are 20 years old?

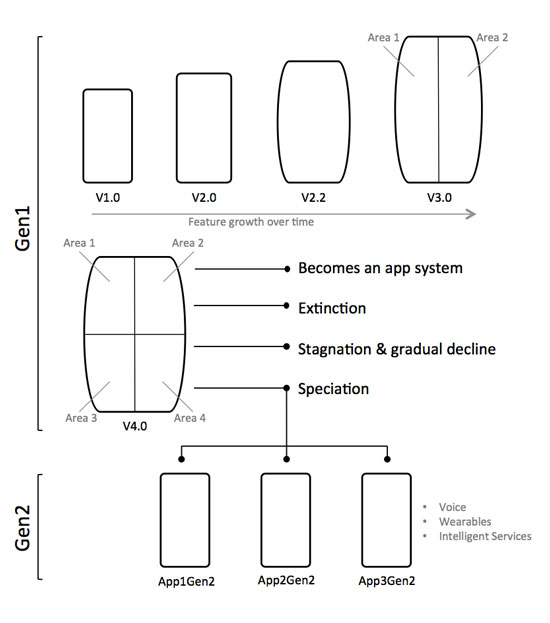

Our experience as developers is that as apps grow old they also grow bigger, and then bigger still as time passes and features get added.

There are some practical limits to app growth. Ultimately an app has to fit on a phone-shaped screen, and there is a limit to how many buttons you can fit in. If you keep adding functionality and features to an app year after year, it inevitably becomes bloated. The bloated app, perhaps now resembling something more like “enterprise software”, departs from the very concept of an app: “a small neatly packaged part of the internet”.

So why do apps grow? Top reasons include:

- We don’t want to have lots of apps – we just want one app so our customers find it easy to choose

- The PC interface does this – so the app should do it as well

- The UI on the product does it – so the app should do it as well

- The user base is growing and we need to give the users new stuff

- Some of our customers are specialised/power users and we need to support them

These are good corporate reasons, but they strain the app design and tend to forget about the plain old user who wants the app to do the one thing the app name/icon suggests.

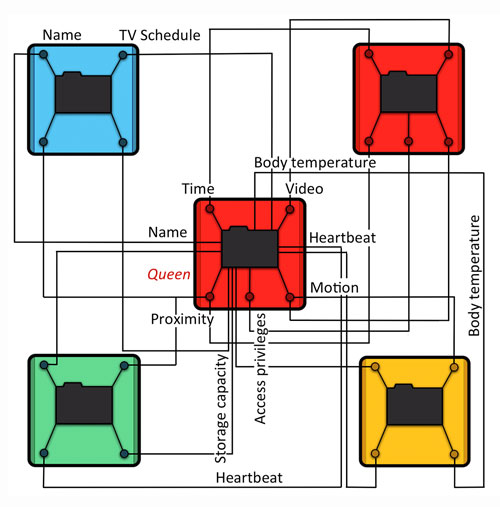

Serving everybody also does a disservice to the specialised power user. They come to the app with their special requirement but find their special feature tucked away at the back of an app whose whole design and evolution serves a more general use case.

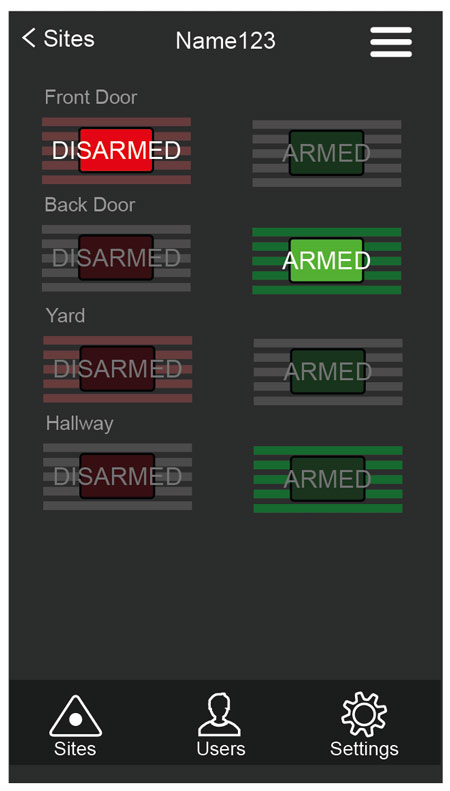

Rethinking a mature app as separate apps enables the company to serve specific user requirements, for example: to open the door, to view the camera, to check the logs, to disarm the system, or to view the recording from two weeks ago. It is of course tempting from a corporate point of view to keep all of these functions together in a super security app. However, each function has a specific user group in mind. A suite of mini apps could be created, with the name of each app reflecting the function or user role.

Subdividing mature multifunctional apps into generation 2 apps can help make an app easy to understand and use again. The really difficult question is: when is the right time to stop developing and start subdividing?

The point of subdivision can arrive simply for a practical internal corporate reason, such as the app becoming too costly to maintain. A more fruitful sort of subdivision can also occur as the result of a design review, led by users, that gives the apps a new lease of life.